Hi there, nice to meet you!

My name is Donghu Kim. I am currently a research scientist at Holiday Robotics, where we are developing dexterous manipulation skills with RL in simulation.

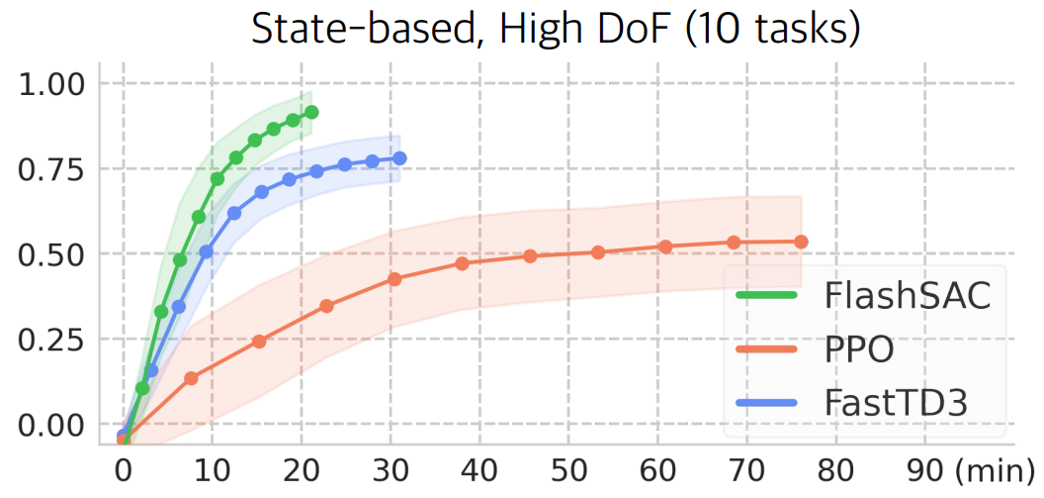

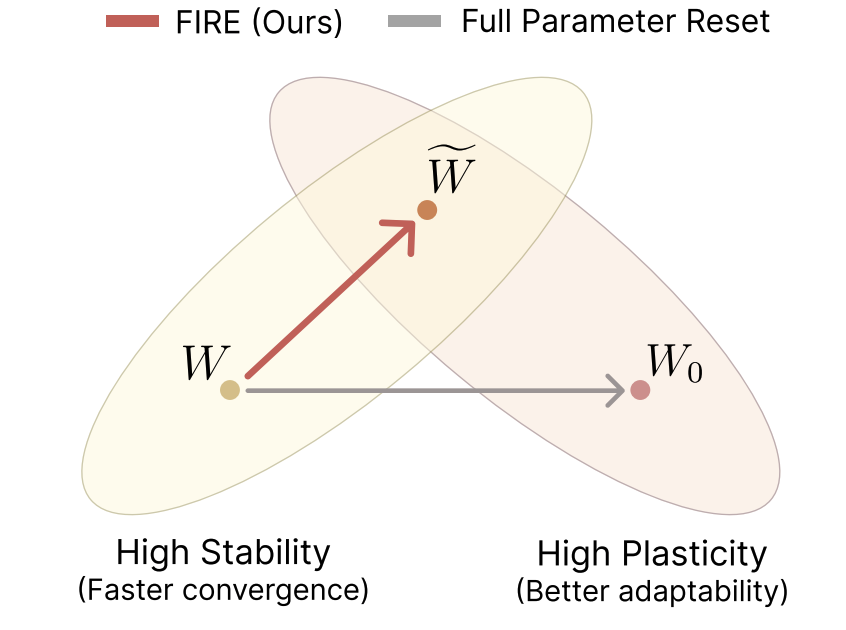

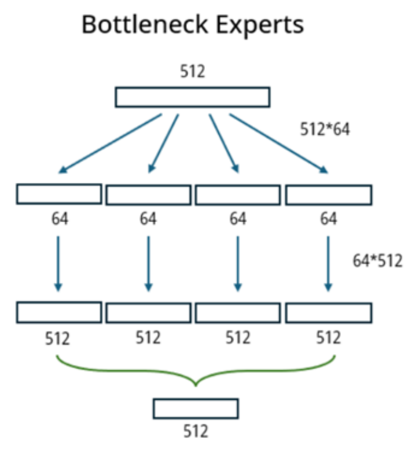

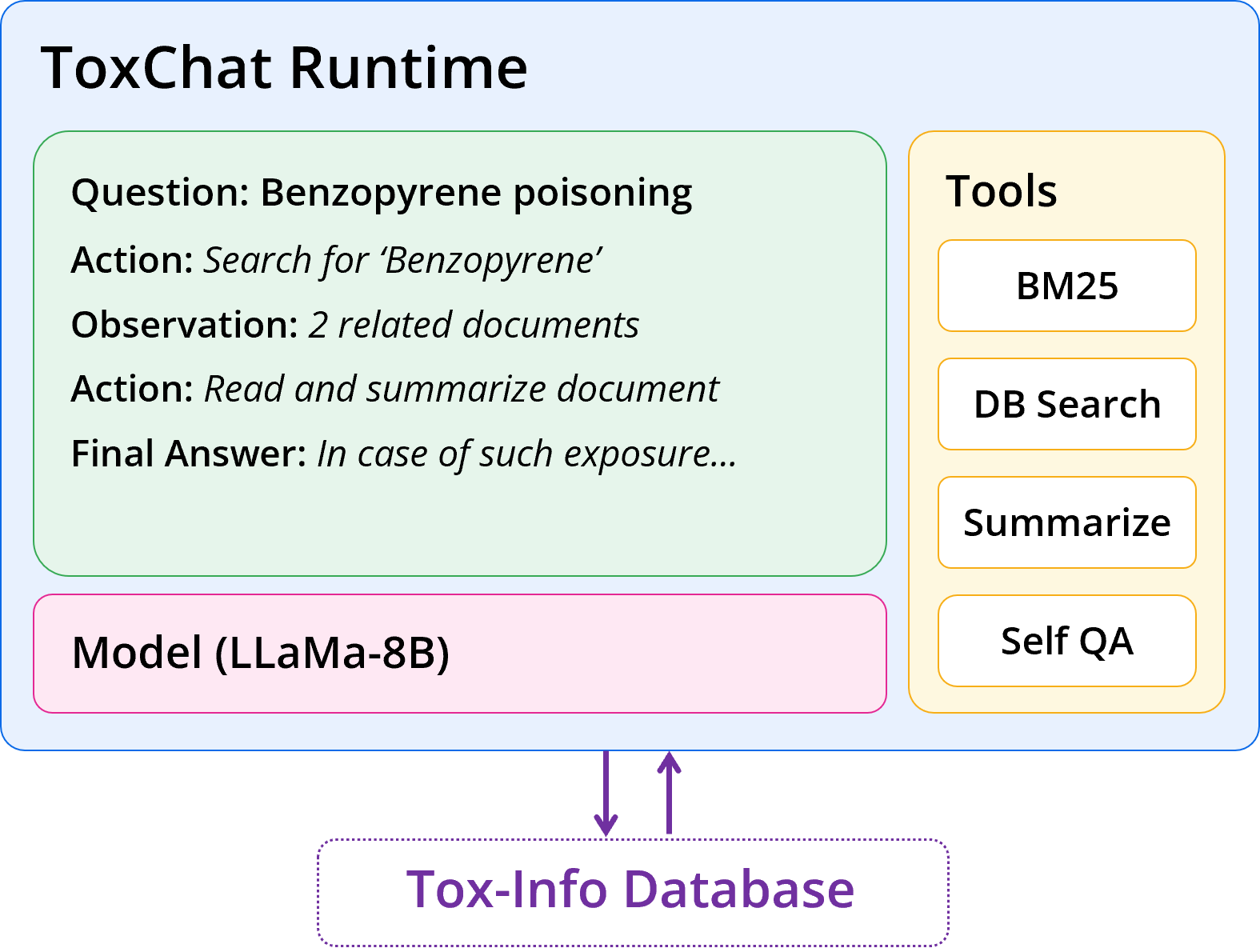

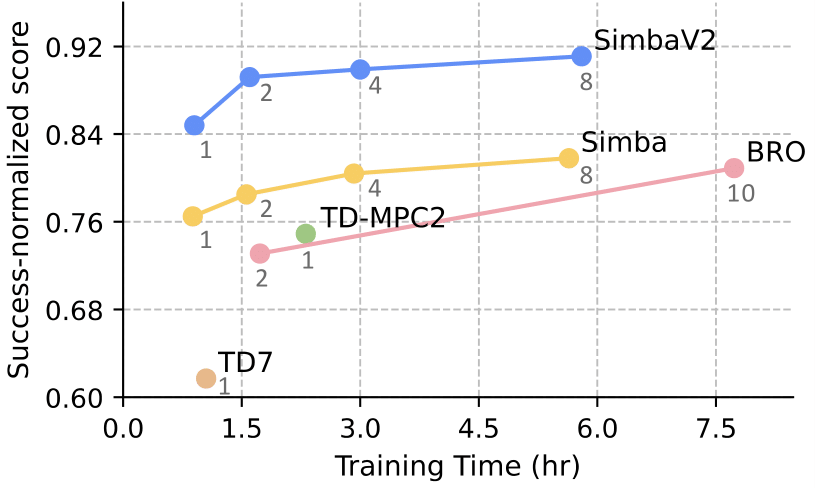

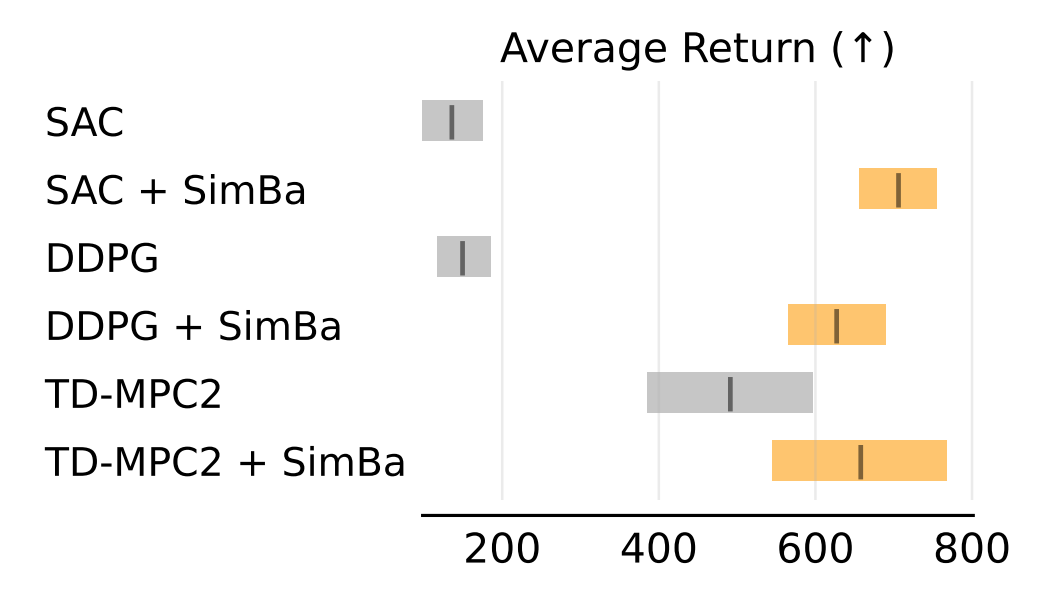

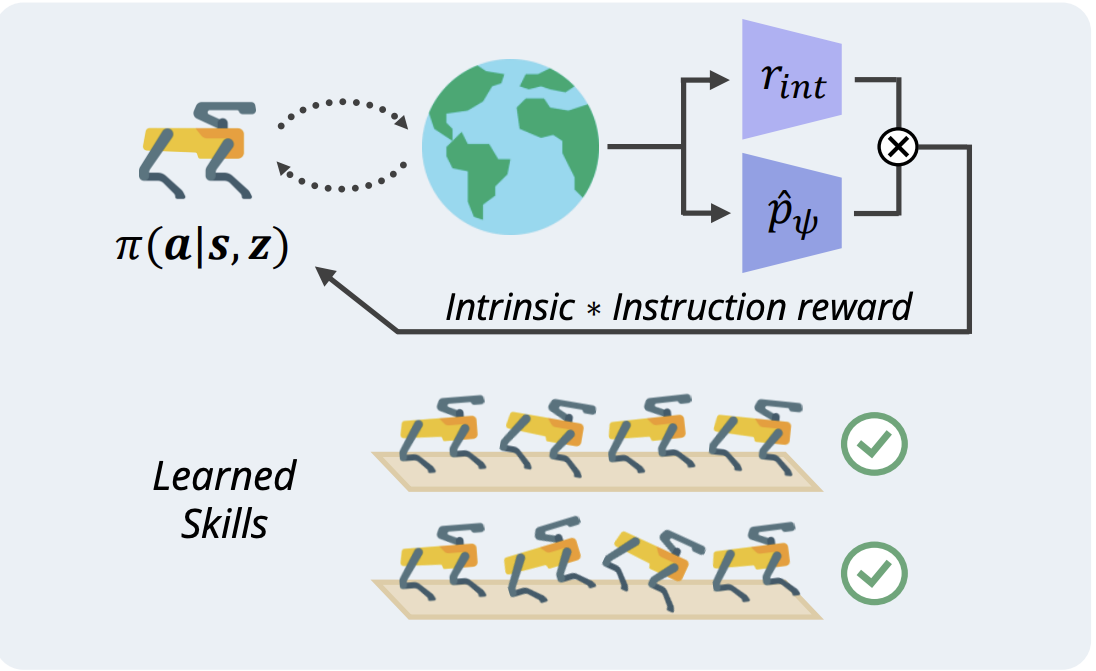

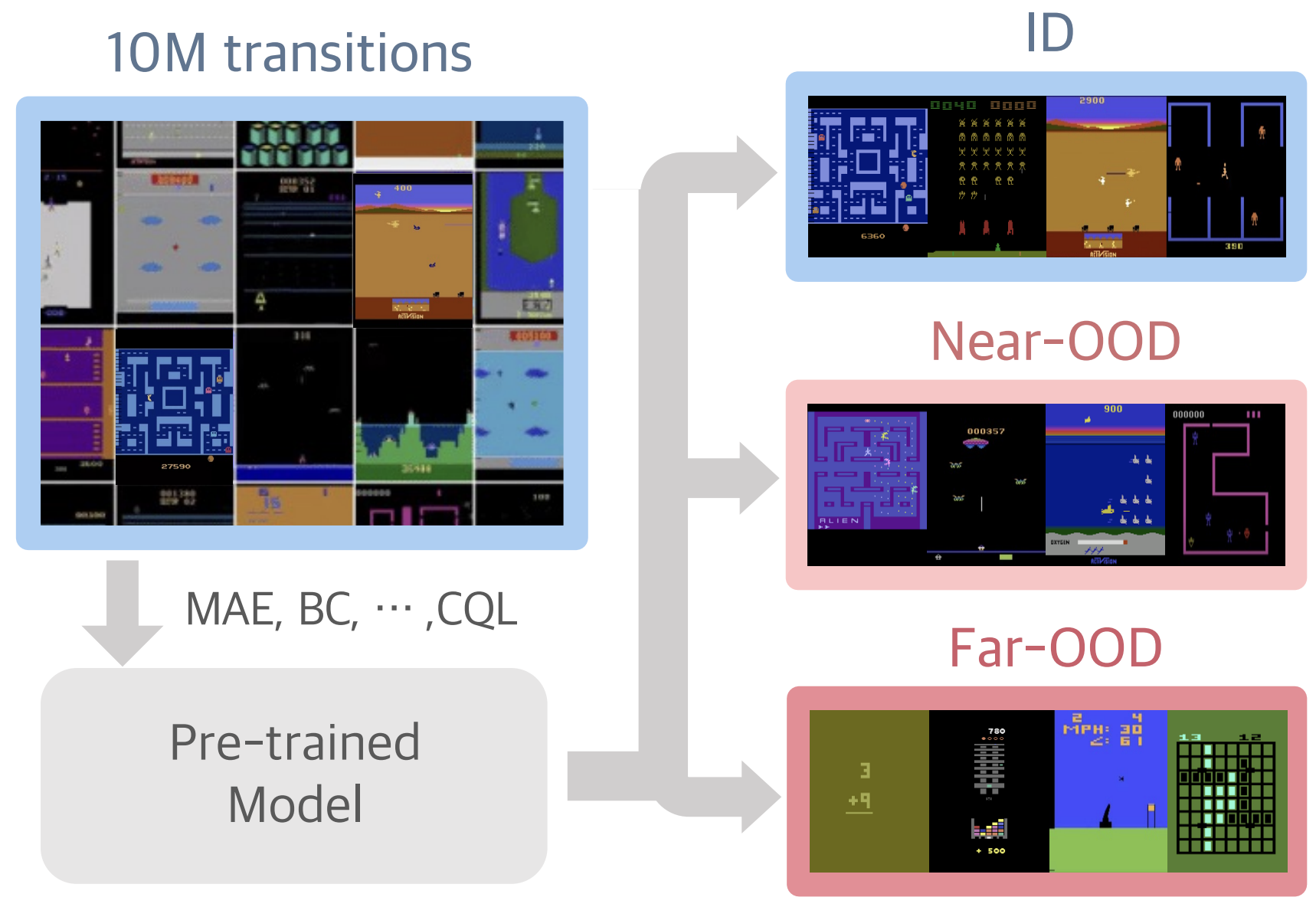

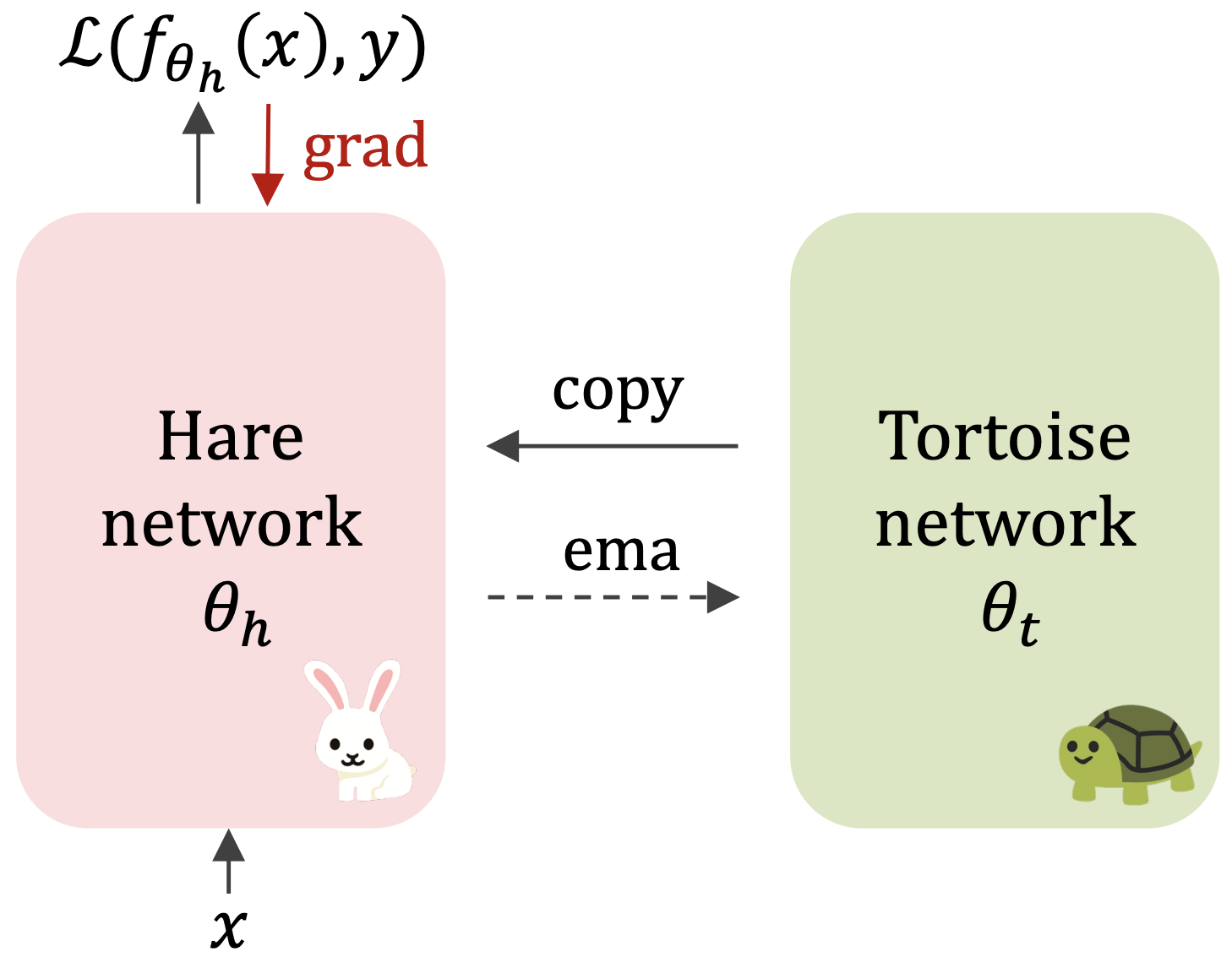

I received my Master's degree from KAIST (advised by Jaegul Choo). My research is based on the belief that RL in simulated environments is inevitable, be it for low-level data generation [1, 2] or for building a library of atomic skillsets [3]. In this direction, I am invested in pushing the absolute limit of efficiency in RL for control: Can we make RL work with only 1K samples? Can we do it within an hour? As far-fetched as the goal may seem, there are many exciting components we can tackle, including feature learning, exploration, architecture design, and optimizers.

I still have a long, long way to go; if you want to discuss anything research-related, I'd be more than happy to chat!

Email / CV / Google Scholar / LinkedIn / GitHub